-

-

-

-

-

-  -

-

-## Installation

-

-```bash

-pip install annotated-doc

-```

-

-Or with `uv`:

-

-```Python

-uv add annotated-doc

-```

-

-## Usage

-

-Import `Doc` and pass a single literal string with the documentation for the specific parameter, class attribute, return type, or variable.

-

-For example, to document a parameter `name` in a function `hi` you could do:

-

-```Python

-from typing import Annotated

-

-from annotated_doc import Doc

-

-def hi(name: Annotated[str, Doc("Who to say hi to")]) -> None:

- print(f"Hi, {name}!")

-```

-

-You can also use it to document class attributes:

-

-```Python

-from typing import Annotated

-

-from annotated_doc import Doc

-

-class User:

- name: Annotated[str, Doc("The user's name")]

- age: Annotated[int, Doc("The user's age")]

-```

-

-The same way, you could document return types and variables, or anything that could have a type annotation with `Annotated`.

-

-## Who Uses This

-

-`annotated-doc` was made for:

-

-* [FastAPI](https://fastapi.tiangolo.com/)

-* [Typer](https://typer.tiangolo.com/)

-* [SQLModel](https://sqlmodel.tiangolo.com/)

-* [Asyncer](https://asyncer.tiangolo.com/)

-

-`annotated-doc` is supported by [griffe-typingdoc](https://github.com/mkdocstrings/griffe-typingdoc), which powers reference documentation like the one in the [FastAPI Reference](https://fastapi.tiangolo.com/reference/).

-

-## Reasons not to use `annotated-doc`

-

-You are already comfortable with one of the existing docstring formats, like:

-

-* Sphinx

-* numpydoc

-* Google

-* Keras

-

-Your team is already comfortable using them.

-

-You prefer having the documentation about parameters all together in a docstring, separated from the code defining them.

-

-You care about a specific set of users, using one specific editor, and that editor already has support for the specific docstring format you use.

-

-## Reasons to use `annotated-doc`

-

-* No micro-syntax to learn for newcomers, it’s **just Python** syntax.

-* **Editing** would be already fully supported by default by any editor (current or future) supporting Python syntax, including syntax errors, syntax highlighting, etc.

-* **Rendering** would be relatively straightforward to implement by static tools (tools that don't need runtime execution), as the information can be extracted from the AST they normally already create.

-* **Deduplication of information**: the name of a parameter would be defined in a single place, not duplicated inside of a docstring.

-* **Elimination** of the possibility of having **inconsistencies** when removing a parameter or class variable and **forgetting to remove** its documentation.

-* **Minimization** of the probability of adding a new parameter or class variable and **forgetting to add its documentation**.

-* **Elimination** of the possibility of having **inconsistencies** between the **name** of a parameter in the **signature** and the name in the docstring when it is renamed.

-* **Access** to the documentation string for each symbol at **runtime**, including existing (older) Python versions.

-* A more formalized way to document other symbols, like type aliases, that could use Annotated.

-* **Support** for apps using FastAPI, Typer and others.

-* **AI Accessibility**: AI tools will have an easier way understanding each parameter as the distance from documentation to parameter is much closer.

-

-## History

-

-I ([@tiangolo](https://github.com/tiangolo)) originally wanted for this to be part of the Python standard library (in [PEP 727](https://peps.python.org/pep-0727/)), but the proposal was withdrawn as there was a fair amount of negative feedback and opposition.

-

-The conclusion was that this was better done as an external effort, in a third-party library.

-

-So, here it is, with a simpler approach, as a third-party library, in a way that can be used by others, starting with FastAPI and friends.

-

-## License

-

-This project is licensed under the terms of the MIT license.

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/RECORD b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/RECORD

deleted file mode 100644

index 06bbc8d..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/RECORD

+++ /dev/null

@@ -1,11 +0,0 @@

-annotated_doc-0.0.4.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4

-annotated_doc-0.0.4.dist-info/METADATA,sha256=Irm5KJua33dY2qKKAjJ-OhKaVBVIfwFGej_dSe3Z1TU,6566

-annotated_doc-0.0.4.dist-info/RECORD,,

-annotated_doc-0.0.4.dist-info/WHEEL,sha256=9P2ygRxDrTJz3gsagc0Z96ukrxjr-LFBGOgv3AuKlCA,90

-annotated_doc-0.0.4.dist-info/entry_points.txt,sha256=6OYgBcLyFCUgeqLgnvMyOJxPCWzgy7se4rLPKtNonMs,34

-annotated_doc-0.0.4.dist-info/licenses/LICENSE,sha256=__Fwd5pqy_ZavbQFwIfxzuF4ZpHkqWpANFF-SlBKDN8,1086

-annotated_doc/__init__.py,sha256=VuyxxUe80kfEyWnOrCx_Bk8hybo3aKo6RYBlkBBYW8k,52

-annotated_doc/__pycache__/__init__.cpython-312.pyc,,

-annotated_doc/__pycache__/main.cpython-312.pyc,,

-annotated_doc/main.py,sha256=5Zfvxv80SwwLqpRW73AZyZyiM4bWma9QWRbp_cgD20s,1075

-annotated_doc/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/WHEEL b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/WHEEL

deleted file mode 100644

index 045c8ac..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/WHEEL

+++ /dev/null

@@ -1,4 +0,0 @@

-Wheel-Version: 1.0

-Generator: pdm-backend (2.4.5)

-Root-Is-Purelib: true

-Tag: py3-none-any

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/entry_points.txt b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/entry_points.txt

deleted file mode 100644

index c3ad472..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/entry_points.txt

+++ /dev/null

@@ -1,4 +0,0 @@

-[console_scripts]

-

-[gui_scripts]

-

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/licenses/LICENSE b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/licenses/LICENSE

deleted file mode 100644

index 7a25446..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/licenses/LICENSE

+++ /dev/null

@@ -1,21 +0,0 @@

-The MIT License (MIT)

-

-Copyright (c) 2025 Sebastián Ramírez

-

-Permission is hereby granted, free of charge, to any person obtaining a copy

-of this software and associated documentation files (the "Software"), to deal

-in the Software without restriction, including without limitation the rights

-to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

-copies of the Software, and to permit persons to whom the Software is

-furnished to do so, subject to the following conditions:

-

-The above copyright notice and this permission notice shall be included in

-all copies or substantial portions of the Software.

-

-THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

-IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

-FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

-AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

-LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

-OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

-THE SOFTWARE.

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__init__.py b/backend/.venv/lib/python3.12/site-packages/annotated_doc/__init__.py

deleted file mode 100644

index a0152a7..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__init__.py

+++ /dev/null

@@ -1,3 +0,0 @@

-from .main import Doc as Doc

-

-__version__ = "0.0.4"

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/__init__.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/__init__.cpython-312.pyc

deleted file mode 100644

index acef568..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/__init__.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/main.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/main.cpython-312.pyc

deleted file mode 100644

index 3f27881..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/main.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/main.py b/backend/.venv/lib/python3.12/site-packages/annotated_doc/main.py

deleted file mode 100644

index 7063c59..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc/main.py

+++ /dev/null

@@ -1,36 +0,0 @@

-class Doc:

- """Define the documentation of a type annotation using `Annotated`, to be

- used in class attributes, function and method parameters, return values,

- and variables.

-

- The value should be a positional-only string literal to allow static tools

- like editors and documentation generators to use it.

-

- This complements docstrings.

-

- The string value passed is available in the attribute `documentation`.

-

- Example:

-

- ```Python

- from typing import Annotated

- from annotated_doc import Doc

-

- def hi(name: Annotated[str, Doc("Who to say hi to")]) -> None:

- print(f"Hi, {name}!")

- ```

- """

-

- def __init__(self, documentation: str, /) -> None:

- self.documentation = documentation

-

- def __repr__(self) -> str:

- return f"Doc({self.documentation!r})"

-

- def __hash__(self) -> int:

- return hash(self.documentation)

-

- def __eq__(self, other: object) -> bool:

- if not isinstance(other, Doc):

- return NotImplemented

- return self.documentation == other.documentation

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/py.typed b/backend/.venv/lib/python3.12/site-packages/annotated_doc/py.typed

deleted file mode 100644

index e69de29..0000000

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/INSTALLER b/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/INSTALLER

deleted file mode 100644

index a1b589e..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/INSTALLER

+++ /dev/null

@@ -1 +0,0 @@

-pip

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/METADATA b/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/METADATA

deleted file mode 100644

index 3ac05cf..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/METADATA

+++ /dev/null

@@ -1,295 +0,0 @@

-Metadata-Version: 2.3

-Name: annotated-types

-Version: 0.7.0

-Summary: Reusable constraint types to use with typing.Annotated

-Project-URL: Homepage, https://github.com/annotated-types/annotated-types

-Project-URL: Source, https://github.com/annotated-types/annotated-types

-Project-URL: Changelog, https://github.com/annotated-types/annotated-types/releases

-Author-email: Adrian Garcia Badaracco <1755071+adriangb@users.noreply.github.com>, Samuel Colvin

-

-

-## Installation

-

-```bash

-pip install annotated-doc

-```

-

-Or with `uv`:

-

-```Python

-uv add annotated-doc

-```

-

-## Usage

-

-Import `Doc` and pass a single literal string with the documentation for the specific parameter, class attribute, return type, or variable.

-

-For example, to document a parameter `name` in a function `hi` you could do:

-

-```Python

-from typing import Annotated

-

-from annotated_doc import Doc

-

-def hi(name: Annotated[str, Doc("Who to say hi to")]) -> None:

- print(f"Hi, {name}!")

-```

-

-You can also use it to document class attributes:

-

-```Python

-from typing import Annotated

-

-from annotated_doc import Doc

-

-class User:

- name: Annotated[str, Doc("The user's name")]

- age: Annotated[int, Doc("The user's age")]

-```

-

-The same way, you could document return types and variables, or anything that could have a type annotation with `Annotated`.

-

-## Who Uses This

-

-`annotated-doc` was made for:

-

-* [FastAPI](https://fastapi.tiangolo.com/)

-* [Typer](https://typer.tiangolo.com/)

-* [SQLModel](https://sqlmodel.tiangolo.com/)

-* [Asyncer](https://asyncer.tiangolo.com/)

-

-`annotated-doc` is supported by [griffe-typingdoc](https://github.com/mkdocstrings/griffe-typingdoc), which powers reference documentation like the one in the [FastAPI Reference](https://fastapi.tiangolo.com/reference/).

-

-## Reasons not to use `annotated-doc`

-

-You are already comfortable with one of the existing docstring formats, like:

-

-* Sphinx

-* numpydoc

-* Google

-* Keras

-

-Your team is already comfortable using them.

-

-You prefer having the documentation about parameters all together in a docstring, separated from the code defining them.

-

-You care about a specific set of users, using one specific editor, and that editor already has support for the specific docstring format you use.

-

-## Reasons to use `annotated-doc`

-

-* No micro-syntax to learn for newcomers, it’s **just Python** syntax.

-* **Editing** would be already fully supported by default by any editor (current or future) supporting Python syntax, including syntax errors, syntax highlighting, etc.

-* **Rendering** would be relatively straightforward to implement by static tools (tools that don't need runtime execution), as the information can be extracted from the AST they normally already create.

-* **Deduplication of information**: the name of a parameter would be defined in a single place, not duplicated inside of a docstring.

-* **Elimination** of the possibility of having **inconsistencies** when removing a parameter or class variable and **forgetting to remove** its documentation.

-* **Minimization** of the probability of adding a new parameter or class variable and **forgetting to add its documentation**.

-* **Elimination** of the possibility of having **inconsistencies** between the **name** of a parameter in the **signature** and the name in the docstring when it is renamed.

-* **Access** to the documentation string for each symbol at **runtime**, including existing (older) Python versions.

-* A more formalized way to document other symbols, like type aliases, that could use Annotated.

-* **Support** for apps using FastAPI, Typer and others.

-* **AI Accessibility**: AI tools will have an easier way understanding each parameter as the distance from documentation to parameter is much closer.

-

-## History

-

-I ([@tiangolo](https://github.com/tiangolo)) originally wanted for this to be part of the Python standard library (in [PEP 727](https://peps.python.org/pep-0727/)), but the proposal was withdrawn as there was a fair amount of negative feedback and opposition.

-

-The conclusion was that this was better done as an external effort, in a third-party library.

-

-So, here it is, with a simpler approach, as a third-party library, in a way that can be used by others, starting with FastAPI and friends.

-

-## License

-

-This project is licensed under the terms of the MIT license.

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/RECORD b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/RECORD

deleted file mode 100644

index 06bbc8d..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/RECORD

+++ /dev/null

@@ -1,11 +0,0 @@

-annotated_doc-0.0.4.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4

-annotated_doc-0.0.4.dist-info/METADATA,sha256=Irm5KJua33dY2qKKAjJ-OhKaVBVIfwFGej_dSe3Z1TU,6566

-annotated_doc-0.0.4.dist-info/RECORD,,

-annotated_doc-0.0.4.dist-info/WHEEL,sha256=9P2ygRxDrTJz3gsagc0Z96ukrxjr-LFBGOgv3AuKlCA,90

-annotated_doc-0.0.4.dist-info/entry_points.txt,sha256=6OYgBcLyFCUgeqLgnvMyOJxPCWzgy7se4rLPKtNonMs,34

-annotated_doc-0.0.4.dist-info/licenses/LICENSE,sha256=__Fwd5pqy_ZavbQFwIfxzuF4ZpHkqWpANFF-SlBKDN8,1086

-annotated_doc/__init__.py,sha256=VuyxxUe80kfEyWnOrCx_Bk8hybo3aKo6RYBlkBBYW8k,52

-annotated_doc/__pycache__/__init__.cpython-312.pyc,,

-annotated_doc/__pycache__/main.cpython-312.pyc,,

-annotated_doc/main.py,sha256=5Zfvxv80SwwLqpRW73AZyZyiM4bWma9QWRbp_cgD20s,1075

-annotated_doc/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/WHEEL b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/WHEEL

deleted file mode 100644

index 045c8ac..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/WHEEL

+++ /dev/null

@@ -1,4 +0,0 @@

-Wheel-Version: 1.0

-Generator: pdm-backend (2.4.5)

-Root-Is-Purelib: true

-Tag: py3-none-any

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/entry_points.txt b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/entry_points.txt

deleted file mode 100644

index c3ad472..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/entry_points.txt

+++ /dev/null

@@ -1,4 +0,0 @@

-[console_scripts]

-

-[gui_scripts]

-

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/licenses/LICENSE b/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/licenses/LICENSE

deleted file mode 100644

index 7a25446..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc-0.0.4.dist-info/licenses/LICENSE

+++ /dev/null

@@ -1,21 +0,0 @@

-The MIT License (MIT)

-

-Copyright (c) 2025 Sebastián Ramírez

-

-Permission is hereby granted, free of charge, to any person obtaining a copy

-of this software and associated documentation files (the "Software"), to deal

-in the Software without restriction, including without limitation the rights

-to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

-copies of the Software, and to permit persons to whom the Software is

-furnished to do so, subject to the following conditions:

-

-The above copyright notice and this permission notice shall be included in

-all copies or substantial portions of the Software.

-

-THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

-IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

-FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

-AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

-LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

-OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

-THE SOFTWARE.

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__init__.py b/backend/.venv/lib/python3.12/site-packages/annotated_doc/__init__.py

deleted file mode 100644

index a0152a7..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__init__.py

+++ /dev/null

@@ -1,3 +0,0 @@

-from .main import Doc as Doc

-

-__version__ = "0.0.4"

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/__init__.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/__init__.cpython-312.pyc

deleted file mode 100644

index acef568..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/__init__.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/main.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/main.cpython-312.pyc

deleted file mode 100644

index 3f27881..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/annotated_doc/__pycache__/main.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/main.py b/backend/.venv/lib/python3.12/site-packages/annotated_doc/main.py

deleted file mode 100644

index 7063c59..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_doc/main.py

+++ /dev/null

@@ -1,36 +0,0 @@

-class Doc:

- """Define the documentation of a type annotation using `Annotated`, to be

- used in class attributes, function and method parameters, return values,

- and variables.

-

- The value should be a positional-only string literal to allow static tools

- like editors and documentation generators to use it.

-

- This complements docstrings.

-

- The string value passed is available in the attribute `documentation`.

-

- Example:

-

- ```Python

- from typing import Annotated

- from annotated_doc import Doc

-

- def hi(name: Annotated[str, Doc("Who to say hi to")]) -> None:

- print(f"Hi, {name}!")

- ```

- """

-

- def __init__(self, documentation: str, /) -> None:

- self.documentation = documentation

-

- def __repr__(self) -> str:

- return f"Doc({self.documentation!r})"

-

- def __hash__(self) -> int:

- return hash(self.documentation)

-

- def __eq__(self, other: object) -> bool:

- if not isinstance(other, Doc):

- return NotImplemented

- return self.documentation == other.documentation

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_doc/py.typed b/backend/.venv/lib/python3.12/site-packages/annotated_doc/py.typed

deleted file mode 100644

index e69de29..0000000

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/INSTALLER b/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/INSTALLER

deleted file mode 100644

index a1b589e..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/INSTALLER

+++ /dev/null

@@ -1 +0,0 @@

-pip

diff --git a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/METADATA b/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/METADATA

deleted file mode 100644

index 3ac05cf..0000000

--- a/backend/.venv/lib/python3.12/site-packages/annotated_types-0.7.0.dist-info/METADATA

+++ /dev/null

@@ -1,295 +0,0 @@

-Metadata-Version: 2.3

-Name: annotated-types

-Version: 0.7.0

-Summary: Reusable constraint types to use with typing.Annotated

-Project-URL: Homepage, https://github.com/annotated-types/annotated-types

-Project-URL: Source, https://github.com/annotated-types/annotated-types

-Project-URL: Changelog, https://github.com/annotated-types/annotated-types/releases

-Author-email: Adrian Garcia Badaracco <1755071+adriangb@users.noreply.github.com>, Samuel Colvin - FastAPI framework, high performance, easy to learn, fast to code, ready for production -

- - ---- - -**Documentation**: https://fastapi.tiangolo.com - -**Source Code**: https://github.com/fastapi/fastapi - ---- - -FastAPI is a modern, fast (high-performance), web framework for building APIs with Python based on standard Python type hints. - -The key features are: - -* **Fast**: Very high performance, on par with **NodeJS** and **Go** (thanks to Starlette and Pydantic). [One of the fastest Python frameworks available](#performance). -* **Fast to code**: Increase the speed to develop features by about 200% to 300%. * -* **Fewer bugs**: Reduce about 40% of human (developer) induced errors. * -* **Intuitive**: Great editor support. Completion everywhere. Less time debugging. -* **Easy**: Designed to be easy to use and learn. Less time reading docs. -* **Short**: Minimize code duplication. Multiple features from each parameter declaration. Fewer bugs. -* **Robust**: Get production-ready code. With automatic interactive documentation. -* **Standards-based**: Based on (and fully compatible with) the open standards for APIs: OpenAPI (previously known as Swagger) and JSON Schema. - -* estimation based on tests conducted by an internal development team, building production applications. - -## Sponsors - - -### Keystone Sponsor - - -

-### Gold and Silver Sponsors

-

-

-

-### Gold and Silver Sponsors

-

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

- -

-

-

-Other sponsors

-

-## Opinions

-

-"_[...] I'm using **FastAPI** a ton these days. [...] I'm actually planning to use it for all of my team's **ML services at Microsoft**. Some of them are getting integrated into the core **Windows** product and some **Office** products._"

-

-

-

-

-

-Other sponsors

-

-## Opinions

-

-"_[...] I'm using **FastAPI** a ton these days. [...] I'm actually planning to use it for all of my team's **ML services at Microsoft**. Some of them are getting integrated into the core **Windows** product and some **Office** products._"

-

-Kabir Khan - Microsoft (ref)

-

----

-

-"_We adopted the **FastAPI** library to spawn a **REST** server that can be queried to obtain **predictions**. [for Ludwig]_"

-

-Piero Molino, Yaroslav Dudin, and Sai Sumanth Miryala - Uber (ref)

-

----

-

-"_**Netflix** is pleased to announce the open-source release of our **crisis management** orchestration framework: **Dispatch**! [built with **FastAPI**]_"

-

-Kevin Glisson, Marc Vilanova, Forest Monsen - Netflix (ref)

-

----

-

-"_I’m over the moon excited about **FastAPI**. It’s so fun!_"

-

-Brian Okken - Python Bytes podcast host (ref)

-

----

-

-"_Honestly, what you've built looks super solid and polished. In many ways, it's what I wanted **Hug** to be - it's really inspiring to see someone build that._"

-

-

-

----

-

-"_If you're looking to learn one **modern framework** for building REST APIs, check out **FastAPI** [...] It's fast, easy to use and easy to learn [...]_"

-

-"_We've switched over to **FastAPI** for our **APIs** [...] I think you'll like it [...]_"

-

-

-

----

-

-"_If anyone is looking to build a production Python API, I would highly recommend **FastAPI**. It is **beautifully designed**, **simple to use** and **highly scalable**, it has become a **key component** in our API first development strategy and is driving many automations and services such as our Virtual TAC Engineer._"

-

-Deon Pillsbury - Cisco (ref)

-

----

-

-## FastAPI mini documentary

-

-There's a FastAPI mini documentary released at the end of 2025, you can watch it online:

-

- -

-## **Typer**, the FastAPI of CLIs

-

-

-

-## **Typer**, the FastAPI of CLIs

-

- -

-If you are building a CLI app to be used in the terminal instead of a web API, check out **Typer**.

-

-**Typer** is FastAPI's little sibling. And it's intended to be the **FastAPI of CLIs**. ⌨️ 🚀

-

-## Requirements

-

-FastAPI stands on the shoulders of giants:

-

-* Starlette for the web parts.

-* Pydantic for the data parts.

-

-## Installation

-

-Create and activate a virtual environment and then install FastAPI:

-

-

-

-If you are building a CLI app to be used in the terminal instead of a web API, check out **Typer**.

-

-**Typer** is FastAPI's little sibling. And it's intended to be the **FastAPI of CLIs**. ⌨️ 🚀

-

-## Requirements

-

-FastAPI stands on the shoulders of giants:

-

-* Starlette for the web parts.

-* Pydantic for the data parts.

-

-## Installation

-

-Create and activate a virtual environment and then install FastAPI:

-

-

-

-```console

-$ pip install "fastapi[standard]"

-

----> 100%

-```

-

-

-

-**Note**: Make sure you put `"fastapi[standard]"` in quotes to ensure it works in all terminals.

-

-## Example

-

-### Create it

-

-Create a file `main.py` with:

-

-```Python

-from typing import Union

-

-from fastapi import FastAPI

-

-app = FastAPI()

-

-

-@app.get("/")

-def read_root():

- return {"Hello": "World"}

-

-

-@app.get("/items/{item_id}")

-def read_item(item_id: int, q: Union[str, None] = None):

- return {"item_id": item_id, "q": q}

-```

-

-

-Or use

-

-If your code uses `async` / `await`, use `async def`:

-

-```Python hl_lines="9 14"

-from typing import Union

-

-from fastapi import FastAPI

-

-app = FastAPI()

-

-

-@app.get("/")

-async def read_root():

- return {"Hello": "World"}

-

-

-@app.get("/items/{item_id}")

-async def read_item(item_id: int, q: Union[str, None] = None):

- return {"item_id": item_id, "q": q}

-```

-

-**Note**:

-

-If you don't know, check the _"In a hurry?"_ section about `async` and `await` in the docs.

-

-

-

-### Run it

-

-Run the server with:

-

-Or use async def...

-

-If your code uses `async` / `await`, use `async def`:

-

-```Python hl_lines="9 14"

-from typing import Union

-

-from fastapi import FastAPI

-

-app = FastAPI()

-

-

-@app.get("/")

-async def read_root():

- return {"Hello": "World"}

-

-

-@app.get("/items/{item_id}")

-async def read_item(item_id: int, q: Union[str, None] = None):

- return {"item_id": item_id, "q": q}

-```

-

-**Note**:

-

-If you don't know, check the _"In a hurry?"_ section about `async` and `await` in the docs.

-

-

-

-```console

-$ fastapi dev main.py

-

- ╭────────── FastAPI CLI - Development mode ───────────╮

- │ │

- │ Serving at: http://127.0.0.1:8000 │

- │ │

- │ API docs: http://127.0.0.1:8000/docs │

- │ │

- │ Running in development mode, for production use: │

- │ │

- │ fastapi run │

- │ │

- ╰─────────────────────────────────────────────────────╯

-

-INFO: Will watch for changes in these directories: ['/home/user/code/awesomeapp']

-INFO: Uvicorn running on http://127.0.0.1:8000 (Press CTRL+C to quit)

-INFO: Started reloader process [2248755] using WatchFiles

-INFO: Started server process [2248757]

-INFO: Waiting for application startup.

-INFO: Application startup complete.

-```

-

-

-

-

-About the command

-

-The command `fastapi dev` reads your `main.py` file, detects the **FastAPI** app in it, and starts a server using Uvicorn.

-

-By default, `fastapi dev` will start with auto-reload enabled for local development.

-

-You can read more about it in the FastAPI CLI docs.

-

-

-

-### Check it

-

-Open your browser at http://127.0.0.1:8000/items/5?q=somequery.

-

-You will see the JSON response as:

-

-```JSON

-{"item_id": 5, "q": "somequery"}

-```

-

-You already created an API that:

-

-* Receives HTTP requests in the _paths_ `/` and `/items/{item_id}`.

-* Both _paths_ take `GET` operations (also known as HTTP _methods_).

-* The _path_ `/items/{item_id}` has a _path parameter_ `item_id` that should be an `int`.

-* The _path_ `/items/{item_id}` has an optional `str` _query parameter_ `q`.

-

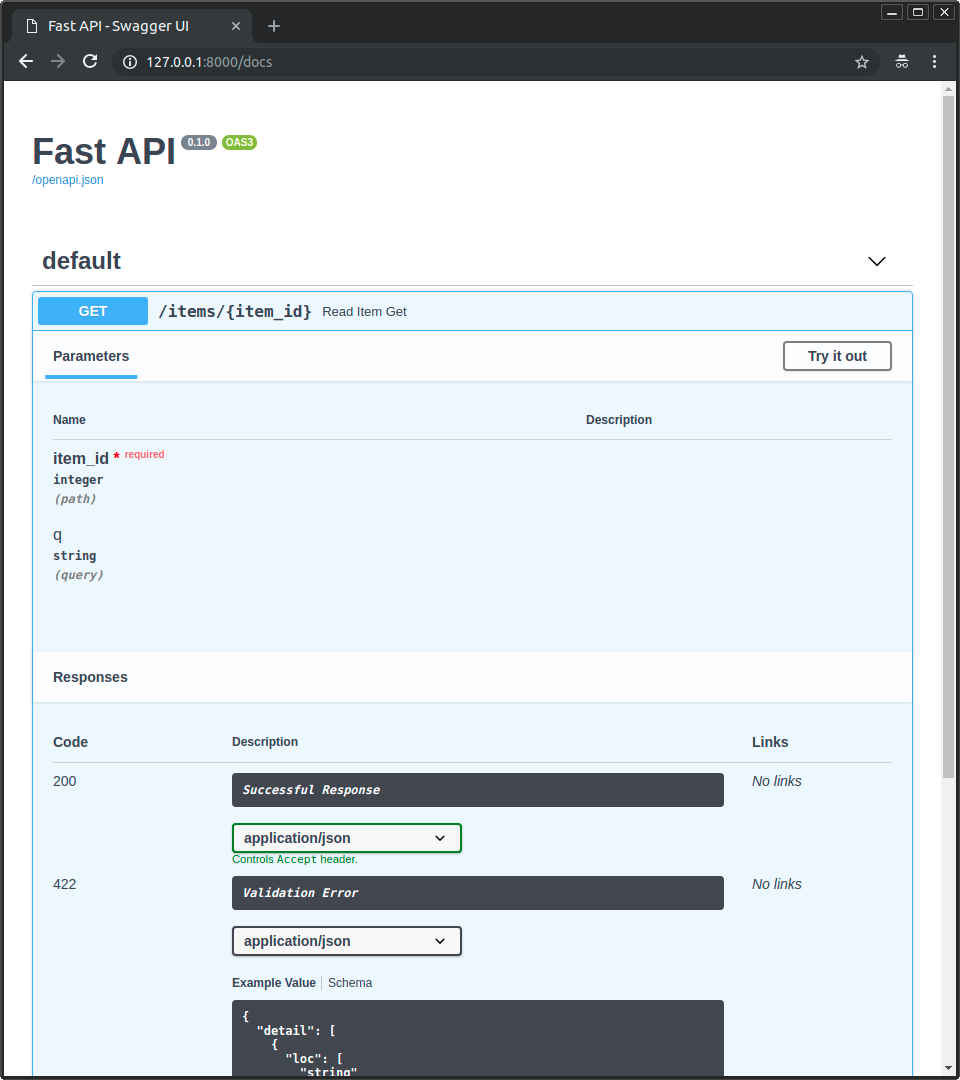

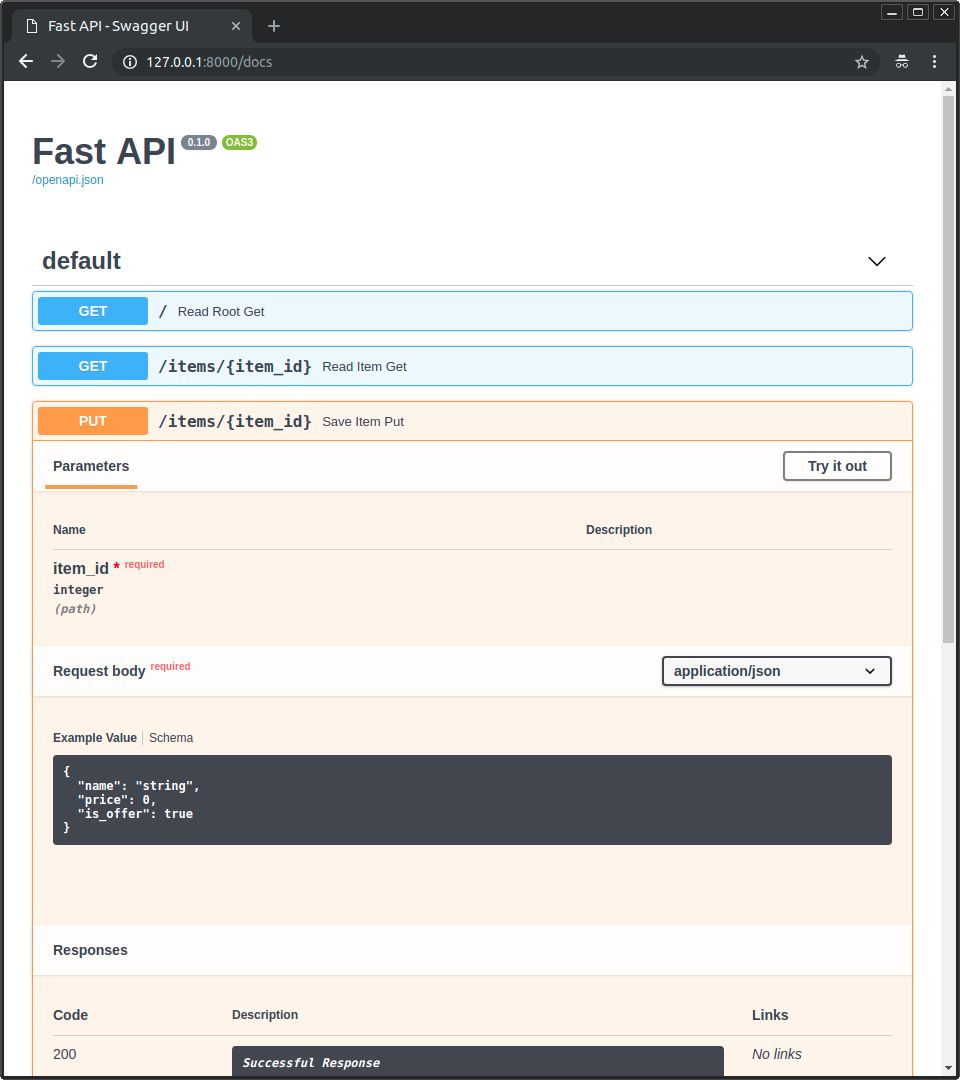

-### Interactive API docs

-

-Now go to http://127.0.0.1:8000/docs.

-

-You will see the automatic interactive API documentation (provided by Swagger UI):

-

-

-

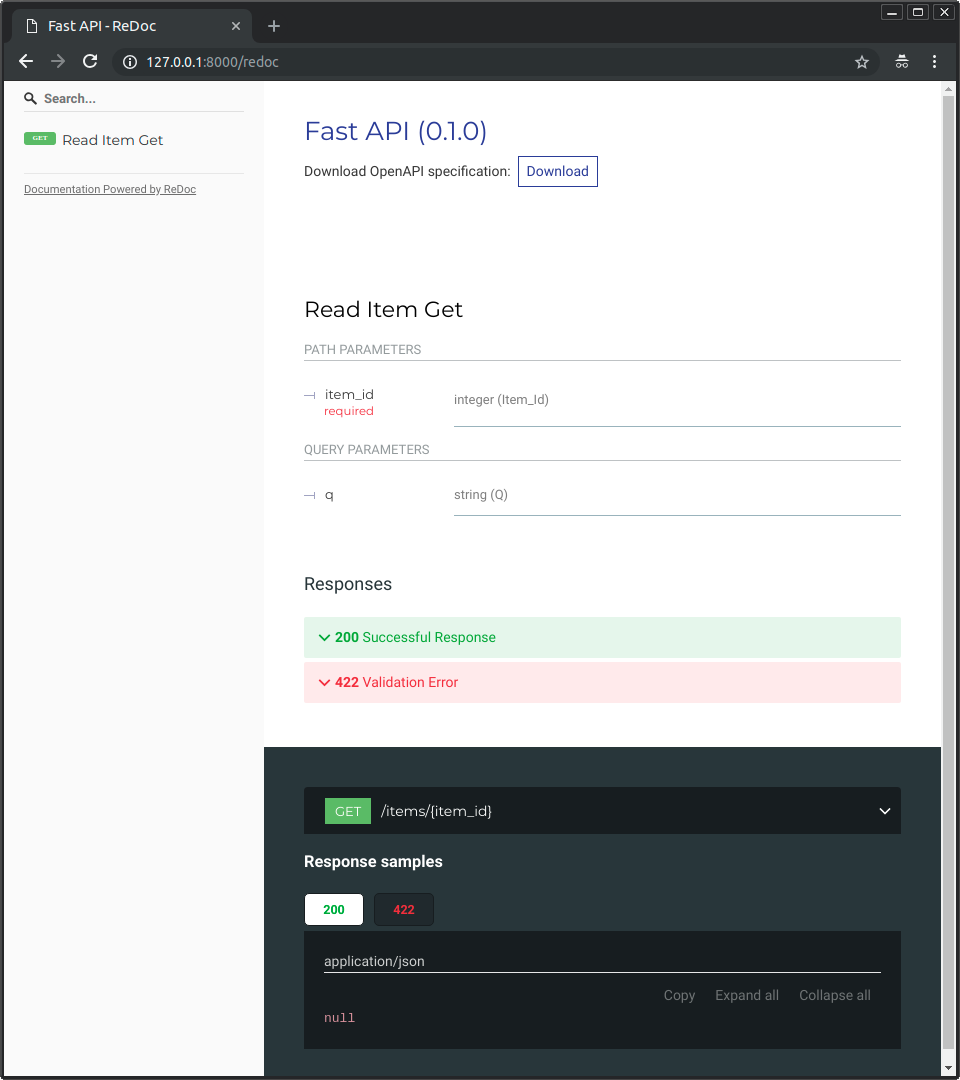

-### Alternative API docs

-

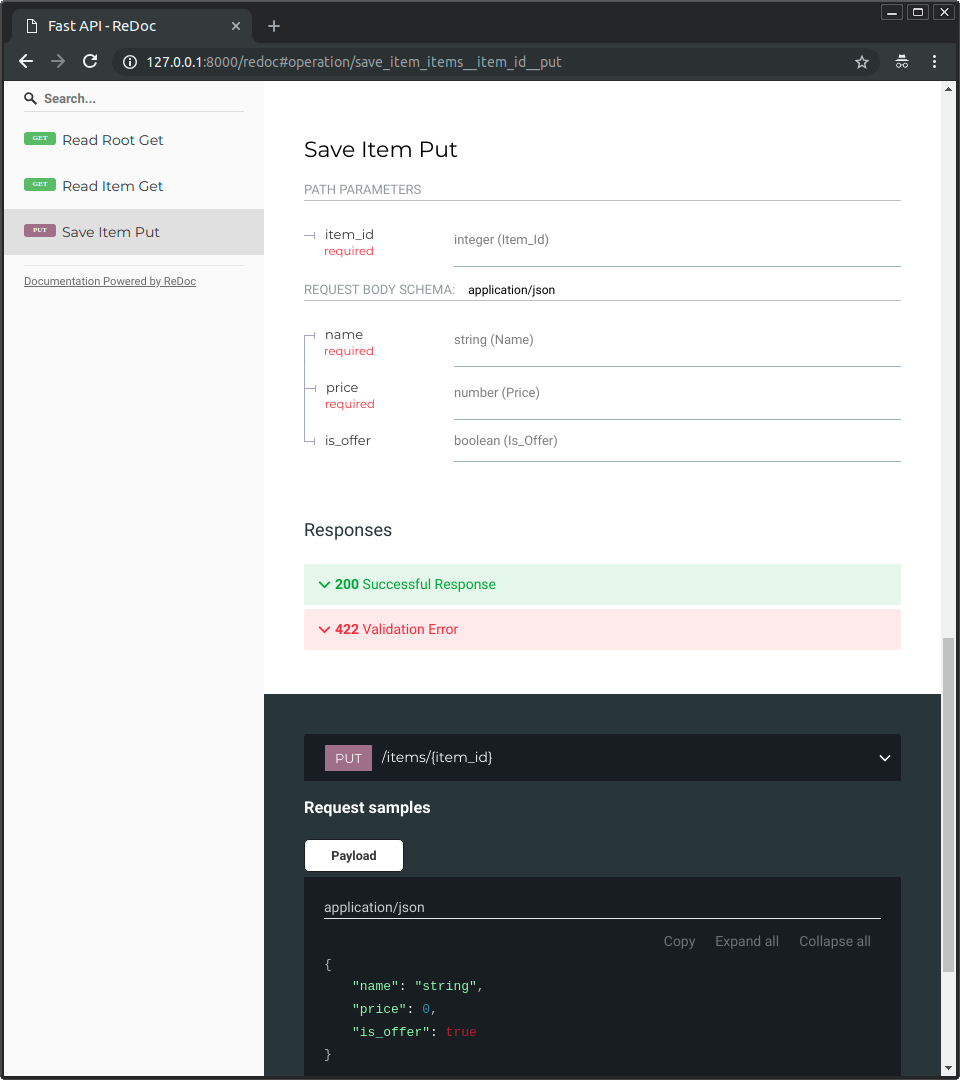

-And now, go to http://127.0.0.1:8000/redoc.

-

-You will see the alternative automatic documentation (provided by ReDoc):

-

-

-

-## Example upgrade

-

-Now modify the file `main.py` to receive a body from a `PUT` request.

-

-Declare the body using standard Python types, thanks to Pydantic.

-

-```Python hl_lines="4 9-12 25-27"

-from typing import Union

-

-from fastapi import FastAPI

-from pydantic import BaseModel

-

-app = FastAPI()

-

-

-class Item(BaseModel):

- name: str

- price: float

- is_offer: Union[bool, None] = None

-

-

-@app.get("/")

-def read_root():

- return {"Hello": "World"}

-

-

-@app.get("/items/{item_id}")

-def read_item(item_id: int, q: Union[str, None] = None):

- return {"item_id": item_id, "q": q}

-

-

-@app.put("/items/{item_id}")

-def update_item(item_id: int, item: Item):

- return {"item_name": item.name, "item_id": item_id}

-```

-

-The `fastapi dev` server should reload automatically.

-

-### Interactive API docs upgrade

-

-Now go to http://127.0.0.1:8000/docs.

-

-* The interactive API documentation will be automatically updated, including the new body:

-

-

-

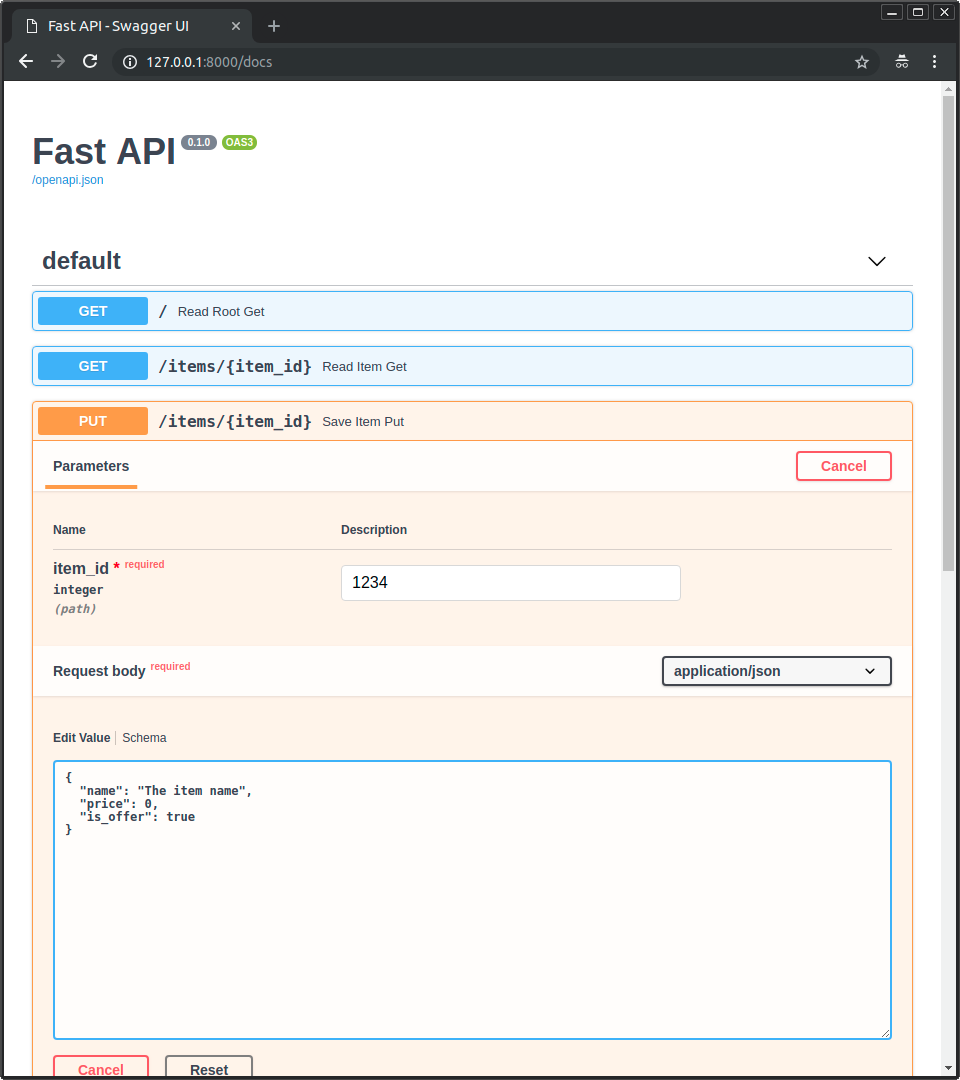

-* Click on the button "Try it out", it allows you to fill the parameters and directly interact with the API:

-

-

-

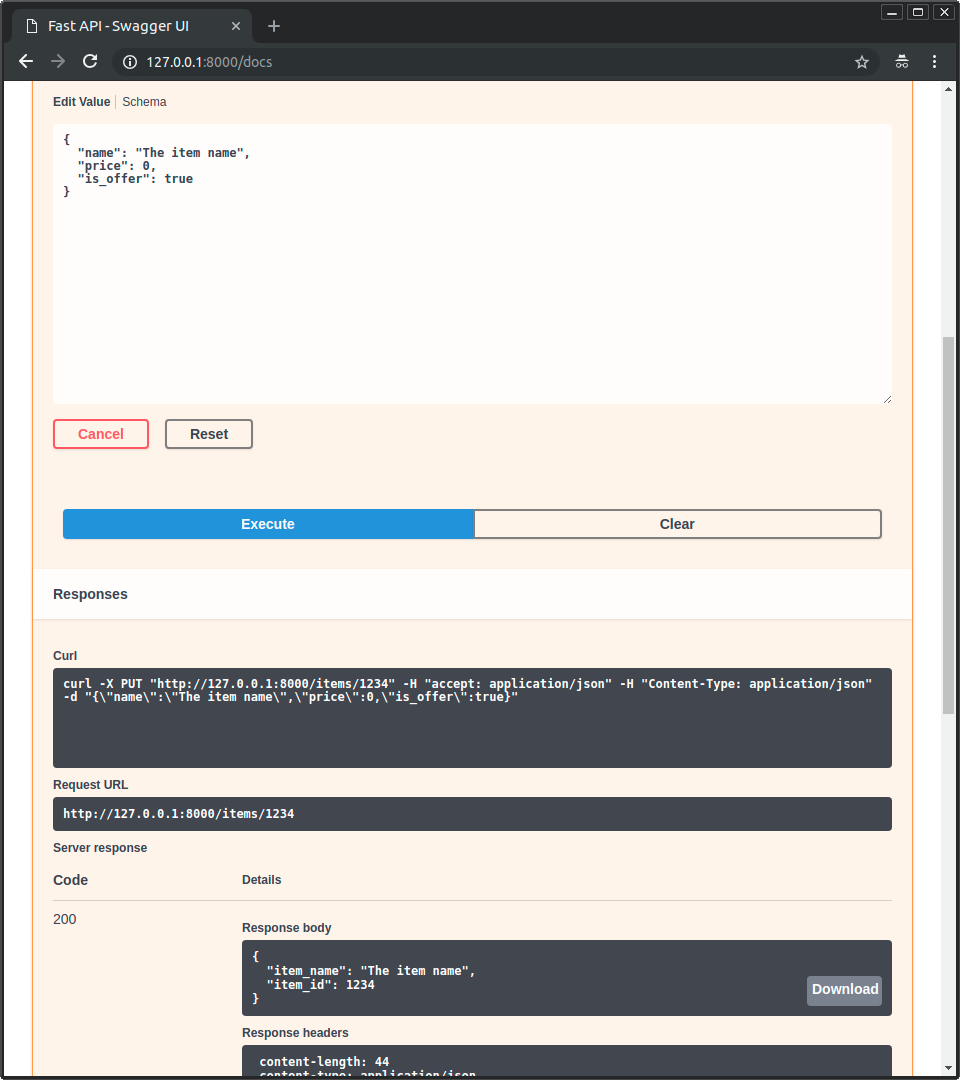

-* Then click on the "Execute" button, the user interface will communicate with your API, send the parameters, get the results and show them on the screen:

-

-

-

-### Alternative API docs upgrade

-

-And now, go to http://127.0.0.1:8000/redoc.

-

-* The alternative documentation will also reflect the new query parameter and body:

-

-

-

-### Recap

-

-In summary, you declare **once** the types of parameters, body, etc. as function parameters.

-

-You do that with standard modern Python types.

-

-You don't have to learn a new syntax, the methods or classes of a specific library, etc.

-

-Just standard **Python**.

-

-For example, for an `int`:

-

-```Python

-item_id: int

-```

-

-or for a more complex `Item` model:

-

-```Python

-item: Item

-```

-

-...and with that single declaration you get:

-

-* Editor support, including:

- * Completion.

- * Type checks.

-* Validation of data:

- * Automatic and clear errors when the data is invalid.

- * Validation even for deeply nested JSON objects.

-* Conversion of input data: coming from the network to Python data and types. Reading from:

- * JSON.

- * Path parameters.

- * Query parameters.

- * Cookies.

- * Headers.

- * Forms.

- * Files.

-* Conversion of output data: converting from Python data and types to network data (as JSON):

- * Convert Python types (`str`, `int`, `float`, `bool`, `list`, etc).

- * `datetime` objects.

- * `UUID` objects.

- * Database models.

- * ...and many more.

-* Automatic interactive API documentation, including 2 alternative user interfaces:

- * Swagger UI.

- * ReDoc.

-

----

-

-Coming back to the previous code example, **FastAPI** will:

-

-* Validate that there is an `item_id` in the path for `GET` and `PUT` requests.

-* Validate that the `item_id` is of type `int` for `GET` and `PUT` requests.

- * If it is not, the client will see a useful, clear error.

-* Check if there is an optional query parameter named `q` (as in `http://127.0.0.1:8000/items/foo?q=somequery`) for `GET` requests.

- * As the `q` parameter is declared with `= None`, it is optional.

- * Without the `None` it would be required (as is the body in the case with `PUT`).

-* For `PUT` requests to `/items/{item_id}`, read the body as JSON:

- * Check that it has a required attribute `name` that should be a `str`.

- * Check that it has a required attribute `price` that has to be a `float`.

- * Check that it has an optional attribute `is_offer`, that should be a `bool`, if present.

- * All this would also work for deeply nested JSON objects.

-* Convert from and to JSON automatically.

-* Document everything with OpenAPI, that can be used by:

- * Interactive documentation systems.

- * Automatic client code generation systems, for many languages.

-* Provide 2 interactive documentation web interfaces directly.

-

----

-

-We just scratched the surface, but you already get the idea of how it all works.

-

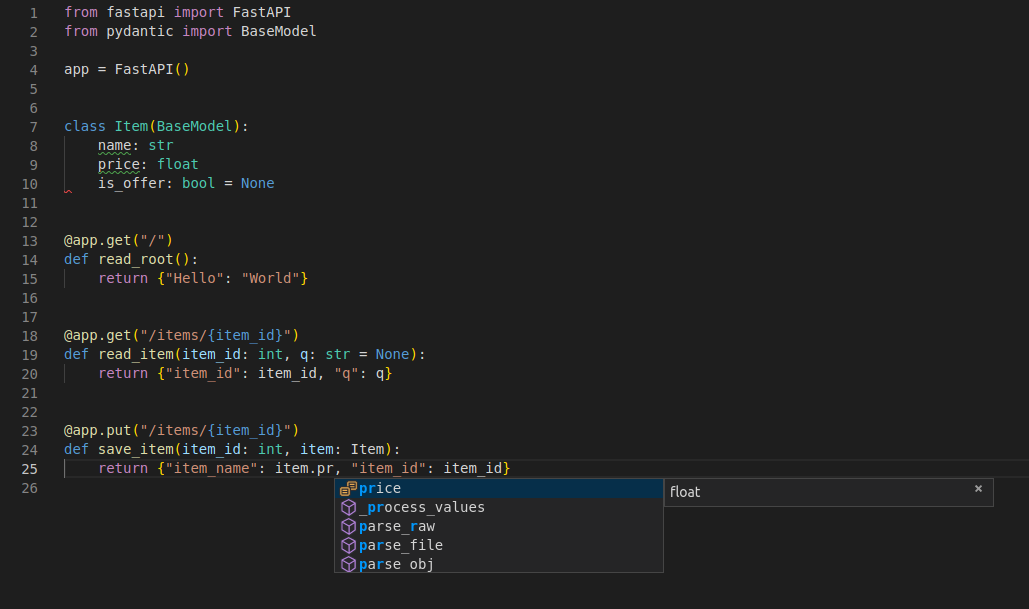

-Try changing the line with:

-

-```Python

- return {"item_name": item.name, "item_id": item_id}

-```

-

-...from:

-

-```Python

- ... "item_name": item.name ...

-```

-

-...to:

-

-```Python

- ... "item_price": item.price ...

-```

-

-...and see how your editor will auto-complete the attributes and know their types:

-

-

-

-For a more complete example including more features, see the Tutorial - User Guide.

-

-**Spoiler alert**: the tutorial - user guide includes:

-

-* Declaration of **parameters** from other different places as: **headers**, **cookies**, **form fields** and **files**.

-* How to set **validation constraints** as `maximum_length` or `regex`.

-* A very powerful and easy to use **Dependency Injection** system.

-* Security and authentication, including support for **OAuth2** with **JWT tokens** and **HTTP Basic** auth.

-* More advanced (but equally easy) techniques for declaring **deeply nested JSON models** (thanks to Pydantic).

-* **GraphQL** integration with Strawberry and other libraries.

-* Many extra features (thanks to Starlette) as:

- * **WebSockets**

- * extremely easy tests based on HTTPX and `pytest`

- * **CORS**

- * **Cookie Sessions**

- * ...and more.

-

-### Deploy your app (optional)

-

-You can optionally deploy your FastAPI app to FastAPI Cloud, go and join the waiting list if you haven't. 🚀

-

-If you already have a **FastAPI Cloud** account (we invited you from the waiting list 😉), you can deploy your application with one command.

-

-Before deploying, make sure you are logged in:

-

-About the command fastapi dev main.py...

-

-The command `fastapi dev` reads your `main.py` file, detects the **FastAPI** app in it, and starts a server using Uvicorn.

-

-By default, `fastapi dev` will start with auto-reload enabled for local development.

-

-You can read more about it in the FastAPI CLI docs.

-

-

-

-```console

-$ fastapi login

-

-You are logged in to FastAPI Cloud 🚀

-```

-

-

-

-Then deploy your app:

-

-

-

-```console

-$ fastapi deploy

-

-Deploying to FastAPI Cloud...

-

-✅ Deployment successful!

-

-🐔 Ready the chicken! Your app is ready at https://myapp.fastapicloud.dev

-```

-

-

-

-That's it! Now you can access your app at that URL. ✨

-

-#### About FastAPI Cloud

-

-**FastAPI Cloud** is built by the same author and team behind **FastAPI**.

-

-It streamlines the process of **building**, **deploying**, and **accessing** an API with minimal effort.

-

-It brings the same **developer experience** of building apps with FastAPI to **deploying** them to the cloud. 🎉

-

-FastAPI Cloud is the primary sponsor and funding provider for the *FastAPI and friends* open source projects. ✨

-

-#### Deploy to other cloud providers

-

-FastAPI is open source and based on standards. You can deploy FastAPI apps to any cloud provider you choose.

-

-Follow your cloud provider's guides to deploy FastAPI apps with them. 🤓

-

-## Performance

-

-Independent TechEmpower benchmarks show **FastAPI** applications running under Uvicorn as one of the fastest Python frameworks available, only below Starlette and Uvicorn themselves (used internally by FastAPI). (*)

-

-To understand more about it, see the section Benchmarks.

-

-## Dependencies

-

-FastAPI depends on Pydantic and Starlette.

-

-### `standard` Dependencies

-

-When you install FastAPI with `pip install "fastapi[standard]"` it comes with the `standard` group of optional dependencies:

-

-Used by Pydantic:

-

-* email-validator - for email validation.

-

-Used by Starlette:

-

-* httpx - Required if you want to use the `TestClient`.

-* jinja2 - Required if you want to use the default template configuration.

-* python-multipart - Required if you want to support form "parsing", with `request.form()`.

-

-Used by FastAPI:

-

-* uvicorn - for the server that loads and serves your application. This includes `uvicorn[standard]`, which includes some dependencies (e.g. `uvloop`) needed for high performance serving.

-* `fastapi-cli[standard]` - to provide the `fastapi` command.

- * This includes `fastapi-cloud-cli`, which allows you to deploy your FastAPI application to FastAPI Cloud.

-

-### Without `standard` Dependencies

-

-If you don't want to include the `standard` optional dependencies, you can install with `pip install fastapi` instead of `pip install "fastapi[standard]"`.

-

-### Without `fastapi-cloud-cli`

-

-If you want to install FastAPI with the standard dependencies but without the `fastapi-cloud-cli`, you can install with `pip install "fastapi[standard-no-fastapi-cloud-cli]"`.

-

-### Additional Optional Dependencies

-

-There are some additional dependencies you might want to install.

-

-Additional optional Pydantic dependencies:

-

-* pydantic-settings - for settings management.

-* pydantic-extra-types - for extra types to be used with Pydantic.

-

-Additional optional FastAPI dependencies:

-

-* orjson - Required if you want to use `ORJSONResponse`.

-* ujson - Required if you want to use `UJSONResponse`.

-

-## License

-

-This project is licensed under the terms of the MIT license.

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/RECORD b/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/RECORD

deleted file mode 100644

index 372e3e4..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/RECORD

+++ /dev/null

@@ -1,103 +0,0 @@

-../../../bin/fastapi,sha256=YoJmb9ZCq_mki6xo8vzXXMZGMucbJZsUkvK0Sh_J-KE,213

-fastapi-0.128.0.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4

-fastapi-0.128.0.dist-info/METADATA,sha256=hKL7LtKoBl4ojHSJ_u5MsihJRMPBU5AsppXt75m24FY,30977

-fastapi-0.128.0.dist-info/RECORD,,

-fastapi-0.128.0.dist-info/REQUESTED,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

-fastapi-0.128.0.dist-info/WHEEL,sha256=tsUv_t7BDeJeRHaSrczbGeuK-TtDpGsWi_JfpzD255I,90

-fastapi-0.128.0.dist-info/entry_points.txt,sha256=GCf-WbIZxyGT4MUmrPGj1cOHYZoGsNPHAvNkT6hnGeA,61

-fastapi-0.128.0.dist-info/licenses/LICENSE,sha256=Tsif_IFIW5f-xYSy1KlhAy7v_oNEU4lP2cEnSQbMdE4,1086

-fastapi/__init__.py,sha256=wlYVfo3p5m4F8JxyVpV1aFS5XKRzgCXBfB9s231GveY,1081

-fastapi/__main__.py,sha256=bKePXLdO4SsVSM6r9SVoLickJDcR2c0cTOxZRKq26YQ,37

-fastapi/__pycache__/__init__.cpython-312.pyc,,

-fastapi/__pycache__/__main__.cpython-312.pyc,,

-fastapi/__pycache__/applications.cpython-312.pyc,,

-fastapi/__pycache__/background.cpython-312.pyc,,

-fastapi/__pycache__/cli.cpython-312.pyc,,

-fastapi/__pycache__/concurrency.cpython-312.pyc,,

-fastapi/__pycache__/datastructures.cpython-312.pyc,,

-fastapi/__pycache__/encoders.cpython-312.pyc,,

-fastapi/__pycache__/exception_handlers.cpython-312.pyc,,

-fastapi/__pycache__/exceptions.cpython-312.pyc,,

-fastapi/__pycache__/logger.cpython-312.pyc,,

-fastapi/__pycache__/param_functions.cpython-312.pyc,,

-fastapi/__pycache__/params.cpython-312.pyc,,

-fastapi/__pycache__/requests.cpython-312.pyc,,

-fastapi/__pycache__/responses.cpython-312.pyc,,

-fastapi/__pycache__/routing.cpython-312.pyc,,

-fastapi/__pycache__/staticfiles.cpython-312.pyc,,

-fastapi/__pycache__/templating.cpython-312.pyc,,

-fastapi/__pycache__/testclient.cpython-312.pyc,,

-fastapi/__pycache__/types.cpython-312.pyc,,

-fastapi/__pycache__/utils.cpython-312.pyc,,

-fastapi/__pycache__/websockets.cpython-312.pyc,,

-fastapi/_compat/__init__.py,sha256=o3dg67W5LlwA52_1Y9Re_JhelcG0oj5ke_GdQHcwBnw,2226

-fastapi/_compat/__pycache__/__init__.cpython-312.pyc,,

-fastapi/_compat/__pycache__/shared.cpython-312.pyc,,

-fastapi/_compat/__pycache__/v2.cpython-312.pyc,,

-fastapi/_compat/shared.py,sha256=yFZWOnzG1JRIPuLOk0eaBcrwP2bap8UkP5I0XBuVZck,6842

-fastapi/_compat/v2.py,sha256=B5OawqkcpTaaDLNZDHFlasGQyYFS7VFbwyDVwUTydE0,19597

-fastapi/applications.py,sha256=IO5F5FdRacBFXYxGPk7zPbwRCa-cxN74HHf0eMEp7xE,180536

-fastapi/background.py,sha256=fDNVXWBZniIQIxW3v-Sc99FT2p4RDKOOWW2fhOe4Nko,1793

-fastapi/cli.py,sha256=OYhZb0NR_deuT5ofyPF2NoNBzZDNOP8Salef2nk-HqA,418

-fastapi/concurrency.py,sha256=xHGDEOQAA6cvFEDX46oq3r2t1Zd4sVvreaRgdIE4juM,1489

-fastapi/datastructures.py,sha256=41qs2ZhTzORMGn7JSAF9qsiPY9XP4uGyGOMKhfzg4i4,5205

-fastapi/dependencies/__init__.py,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

-fastapi/dependencies/__pycache__/__init__.cpython-312.pyc,,

-fastapi/dependencies/__pycache__/models.cpython-312.pyc,,

-fastapi/dependencies/__pycache__/utils.cpython-312.pyc,,

-fastapi/dependencies/models.py,sha256=TjJB2l6m-vhFkau7ysLdgcymZ6SdIJmlrJjqMJs5TZc,7317

-fastapi/dependencies/utils.py,sha256=rJQFCFUC7q765yUcOoSV78GCyTGfS2ePLbMvJ2B-yLc,38549

-fastapi/encoders.py,sha256=uQfXjliV2O93wy7sng3pUuLZw9UOw8HNsAHb2B1ZfEs,11004

-fastapi/exception_handlers.py,sha256=YVcT8Zy021VYYeecgdyh5YEUjEIHKcLspbkSf4OfbJI,1275

-fastapi/exceptions.py,sha256=enNT5h_wDyzY90qA4a_VqMDRSUNHTd1LX_vihMNa-LE,6973

-fastapi/logger.py,sha256=I9NNi3ov8AcqbsbC9wl1X-hdItKgYt2XTrx1f99Zpl4,54

-fastapi/middleware/__init__.py,sha256=oQDxiFVcc1fYJUOIFvphnK7pTT5kktmfL32QXpBFvvo,58

-fastapi/middleware/__pycache__/__init__.cpython-312.pyc,,

-fastapi/middleware/__pycache__/asyncexitstack.cpython-312.pyc,,

-fastapi/middleware/__pycache__/cors.cpython-312.pyc,,

-fastapi/middleware/__pycache__/gzip.cpython-312.pyc,,

-fastapi/middleware/__pycache__/httpsredirect.cpython-312.pyc,,

-fastapi/middleware/__pycache__/trustedhost.cpython-312.pyc,,

-fastapi/middleware/__pycache__/wsgi.cpython-312.pyc,,

-fastapi/middleware/asyncexitstack.py,sha256=RKGlQpGzg3GLosqVhrxBy_NCZ9qJS7zQeNHt5Y3x-00,637

-fastapi/middleware/cors.py,sha256=ynwjWQZoc_vbhzZ3_ZXceoaSrslHFHPdoM52rXr0WUU,79

-fastapi/middleware/gzip.py,sha256=xM5PcsH8QlAimZw4VDvcmTnqQamslThsfe3CVN2voa0,79

-fastapi/middleware/httpsredirect.py,sha256=rL8eXMnmLijwVkH7_400zHri1AekfeBd6D6qs8ix950,115

-fastapi/middleware/trustedhost.py,sha256=eE5XGRxGa7c5zPnMJDGp3BxaL25k5iVQlhnv-Pk0Pss,109

-fastapi/middleware/wsgi.py,sha256=Z3Ue-7wni4lUZMvH3G9ek__acgYdJstbnpZX_HQAboY,79

-fastapi/openapi/__init__.py,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

-fastapi/openapi/__pycache__/__init__.cpython-312.pyc,,

-fastapi/openapi/__pycache__/constants.cpython-312.pyc,,

-fastapi/openapi/__pycache__/docs.cpython-312.pyc,,

-fastapi/openapi/__pycache__/models.cpython-312.pyc,,

-fastapi/openapi/__pycache__/utils.cpython-312.pyc,,

-fastapi/openapi/constants.py,sha256=adGzmis1L1HJRTE3kJ5fmHS_Noq6tIY6pWv_SFzoFDU,153

-fastapi/openapi/docs.py,sha256=wqcXZOhBdnf2pilVNyAIfjKAhfH9MXaQiitwZeJCR7I,10335

-fastapi/openapi/models.py,sha256=xJfPRE7DqNvtqgdouXbtMCCLBrZ-4Bd87QaA_WPUVTA,15419

-fastapi/openapi/utils.py,sha256=HsOqZ8uWSTUYL18jhYI0gU-A1nLHCNu3k3UEIAVzRyY,23795

-fastapi/param_functions.py,sha256=O8bsr2xM8XODE0wjtev8sZLl3Tt_glpdQteM2relVrU,64466

-fastapi/params.py,sha256=YS7z57t0N4H8Rdogx1sU6R-KZDcLn5y46SGz8z5lX-s,26982

-fastapi/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

-fastapi/requests.py,sha256=zayepKFcienBllv3snmWI20Gk0oHNVLU4DDhqXBb4LU,142

-fastapi/responses.py,sha256=QNQQlwpKhQoIPZTTWkpc9d_QGeGZ_aVQPaDV3nQ8m7c,1761

-fastapi/routing.py,sha256=dHZW6NLzbvD7jZT2N-V6fj1Irbe8HR-FDeiBNSuTHHs,178746

-fastapi/security/__init__.py,sha256=bO8pNmxqVRXUjfl2mOKiVZLn0FpBQ61VUYVjmppnbJw,881

-fastapi/security/__pycache__/__init__.cpython-312.pyc,,

-fastapi/security/__pycache__/api_key.cpython-312.pyc,,

-fastapi/security/__pycache__/base.cpython-312.pyc,,

-fastapi/security/__pycache__/http.cpython-312.pyc,,

-fastapi/security/__pycache__/oauth2.cpython-312.pyc,,

-fastapi/security/__pycache__/open_id_connect_url.cpython-312.pyc,,

-fastapi/security/__pycache__/utils.cpython-312.pyc,,

-fastapi/security/api_key.py,sha256=5AriUhrA_KgdtJRJ_BtCDgcTFOUlUUvDSultdIfdApc,9799

-fastapi/security/base.py,sha256=dl4pvbC-RxjfbWgPtCWd8MVU-7CB2SZ22rJDXVCXO6c,141

-fastapi/security/http.py,sha256=gckOhSa1ubLpARU819pxKiZZnmnyg_co6AwQyNE8yxw,13518

-fastapi/security/oauth2.py,sha256=8sU0yRncO_1mK8rdUES1GRijPawi2ZGwLGWphWeS02w,22477

-fastapi/security/open_id_connect_url.py,sha256=pFvSVESThhjYXSDWPlFGtm9bN62JXHzuwnVfmtyNcZE,3158

-fastapi/security/utils.py,sha256=Gk6KGztJnYqvYFTmuQO7ow_icayiqP3HL762ZFRQjfU,286

-fastapi/staticfiles.py,sha256=iirGIt3sdY2QZXd36ijs3Cj-T0FuGFda3cd90kM9Ikw,69

-fastapi/templating.py,sha256=4zsuTWgcjcEainMJFAlW6-gnslm6AgOS1SiiDWfmQxk,76

-fastapi/testclient.py,sha256=nBvaAmX66YldReJNZXPOk1sfuo2Q6hs8bOvIaCep6LQ,66

-fastapi/types.py,sha256=W0HOmfeZw_3PcMDOa6GA-Or9okP9hf_260UkbCfKHY4,455

-fastapi/utils.py,sha256=sl-ddHjWbQiLUNLFiG93IXStAZgp8NSFwJsjo41mb04,5230

-fastapi/websockets.py,sha256=419uncYObEKZG0YcrXscfQQYLSWoE10jqxVMetGdR98,222

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/REQUESTED b/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/REQUESTED

deleted file mode 100644

index e69de29..0000000

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/WHEEL b/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/WHEEL

deleted file mode 100644

index 2efd4ed..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/WHEEL

+++ /dev/null

@@ -1,4 +0,0 @@

-Wheel-Version: 1.0

-Generator: pdm-backend (2.4.6)

-Root-Is-Purelib: true

-Tag: py3-none-any

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/entry_points.txt b/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/entry_points.txt

deleted file mode 100644

index b81849e..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/entry_points.txt

+++ /dev/null

@@ -1,5 +0,0 @@

-[console_scripts]

-fastapi = fastapi.cli:main

-

-[gui_scripts]

-

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/licenses/LICENSE b/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/licenses/LICENSE

deleted file mode 100644

index 3e92463..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi-0.128.0.dist-info/licenses/LICENSE

+++ /dev/null

@@ -1,21 +0,0 @@

-The MIT License (MIT)

-

-Copyright (c) 2018 Sebastián Ramírez

-

-Permission is hereby granted, free of charge, to any person obtaining a copy

-of this software and associated documentation files (the "Software"), to deal

-in the Software without restriction, including without limitation the rights

-to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

-copies of the Software, and to permit persons to whom the Software is

-furnished to do so, subject to the following conditions:

-

-The above copyright notice and this permission notice shall be included in

-all copies or substantial portions of the Software.

-

-THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

-IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

-FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

-AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

-LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

-OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

-THE SOFTWARE.

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__init__.py b/backend/.venv/lib/python3.12/site-packages/fastapi/__init__.py

deleted file mode 100644

index 6133787..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi/__init__.py

+++ /dev/null

@@ -1,25 +0,0 @@

-"""FastAPI framework, high performance, easy to learn, fast to code, ready for production"""

-

-__version__ = "0.128.0"

-

-from starlette import status as status

-

-from .applications import FastAPI as FastAPI

-from .background import BackgroundTasks as BackgroundTasks

-from .datastructures import UploadFile as UploadFile

-from .exceptions import HTTPException as HTTPException

-from .exceptions import WebSocketException as WebSocketException

-from .param_functions import Body as Body

-from .param_functions import Cookie as Cookie

-from .param_functions import Depends as Depends

-from .param_functions import File as File

-from .param_functions import Form as Form

-from .param_functions import Header as Header

-from .param_functions import Path as Path

-from .param_functions import Query as Query

-from .param_functions import Security as Security

-from .requests import Request as Request

-from .responses import Response as Response

-from .routing import APIRouter as APIRouter

-from .websockets import WebSocket as WebSocket

-from .websockets import WebSocketDisconnect as WebSocketDisconnect

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__main__.py b/backend/.venv/lib/python3.12/site-packages/fastapi/__main__.py

deleted file mode 100644

index fc36465..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi/__main__.py

+++ /dev/null

@@ -1,3 +0,0 @@

-from fastapi.cli import main

-

-main()

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/__init__.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/__init__.cpython-312.pyc

deleted file mode 100644

index 8321df2..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/__init__.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/__main__.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/__main__.cpython-312.pyc

deleted file mode 100644

index 3ae7c07..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/__main__.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/applications.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/applications.cpython-312.pyc

deleted file mode 100644

index d2664be..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/applications.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/background.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/background.cpython-312.pyc

deleted file mode 100644

index e0081cf..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/background.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/cli.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/cli.cpython-312.pyc

deleted file mode 100644

index 4a99f9f..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/cli.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/concurrency.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/concurrency.cpython-312.pyc

deleted file mode 100644

index fa3f4da..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/concurrency.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/datastructures.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/datastructures.cpython-312.pyc

deleted file mode 100644

index 4b89595..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/datastructures.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/encoders.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/encoders.cpython-312.pyc

deleted file mode 100644

index 9d02f78..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/encoders.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/exception_handlers.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/exception_handlers.cpython-312.pyc

deleted file mode 100644

index 0d7ab64..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/exception_handlers.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/exceptions.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/exceptions.cpython-312.pyc

deleted file mode 100644

index ebd0482..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/exceptions.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/logger.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/logger.cpython-312.pyc

deleted file mode 100644

index 175467f..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/logger.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/param_functions.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/param_functions.cpython-312.pyc

deleted file mode 100644

index e1dd42f..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/param_functions.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/params.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/params.cpython-312.pyc

deleted file mode 100644

index 744780c..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/params.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/requests.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/requests.cpython-312.pyc

deleted file mode 100644

index a5f016d..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/requests.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/responses.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/responses.cpython-312.pyc

deleted file mode 100644

index e4db996..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/responses.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/routing.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/routing.cpython-312.pyc

deleted file mode 100644

index af52ea5..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/routing.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/staticfiles.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/staticfiles.cpython-312.pyc

deleted file mode 100644

index bad5945..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/staticfiles.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/templating.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/templating.cpython-312.pyc

deleted file mode 100644

index c3dbeb0..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/templating.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/testclient.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/testclient.cpython-312.pyc

deleted file mode 100644

index 36c23cf..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/testclient.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/types.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/types.cpython-312.pyc

deleted file mode 100644

index 88878ab..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/types.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/utils.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/utils.cpython-312.pyc

deleted file mode 100644

index 06a0c9d..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/utils.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/websockets.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/websockets.cpython-312.pyc

deleted file mode 100644

index d3544ea..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/__pycache__/websockets.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__init__.py b/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__init__.py

deleted file mode 100644

index 3dfaf9b..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__init__.py

+++ /dev/null

@@ -1,41 +0,0 @@

-from .shared import PYDANTIC_V2 as PYDANTIC_V2

-from .shared import PYDANTIC_VERSION_MINOR_TUPLE as PYDANTIC_VERSION_MINOR_TUPLE

-from .shared import annotation_is_pydantic_v1 as annotation_is_pydantic_v1

-from .shared import field_annotation_is_scalar as field_annotation_is_scalar

-from .shared import is_pydantic_v1_model_class as is_pydantic_v1_model_class

-from .shared import is_pydantic_v1_model_instance as is_pydantic_v1_model_instance

-from .shared import (

- is_uploadfile_or_nonable_uploadfile_annotation as is_uploadfile_or_nonable_uploadfile_annotation,

-)

-from .shared import (

- is_uploadfile_sequence_annotation as is_uploadfile_sequence_annotation,

-)

-from .shared import lenient_issubclass as lenient_issubclass

-from .shared import sequence_types as sequence_types

-from .shared import value_is_sequence as value_is_sequence

-from .v2 import BaseConfig as BaseConfig

-from .v2 import ModelField as ModelField

-from .v2 import PydanticSchemaGenerationError as PydanticSchemaGenerationError

-from .v2 import RequiredParam as RequiredParam

-from .v2 import Undefined as Undefined

-from .v2 import UndefinedType as UndefinedType

-from .v2 import Url as Url

-from .v2 import Validator as Validator

-from .v2 import _regenerate_error_with_loc as _regenerate_error_with_loc

-from .v2 import copy_field_info as copy_field_info

-from .v2 import create_body_model as create_body_model

-from .v2 import evaluate_forwardref as evaluate_forwardref

-from .v2 import get_cached_model_fields as get_cached_model_fields

-from .v2 import get_compat_model_name_map as get_compat_model_name_map

-from .v2 import get_definitions as get_definitions

-from .v2 import get_missing_field_error as get_missing_field_error

-from .v2 import get_schema_from_model_field as get_schema_from_model_field

-from .v2 import is_bytes_field as is_bytes_field

-from .v2 import is_bytes_sequence_field as is_bytes_sequence_field

-from .v2 import is_scalar_field as is_scalar_field

-from .v2 import is_scalar_sequence_field as is_scalar_sequence_field

-from .v2 import is_sequence_field as is_sequence_field

-from .v2 import serialize_sequence_value as serialize_sequence_value

-from .v2 import (

- with_info_plain_validator_function as with_info_plain_validator_function,

-)

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/__init__.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/__init__.cpython-312.pyc

deleted file mode 100644

index c7137c3..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/__init__.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/shared.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/shared.cpython-312.pyc

deleted file mode 100644

index 6b9fac3..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/shared.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/v2.cpython-312.pyc b/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/v2.cpython-312.pyc

deleted file mode 100644

index 638586c..0000000

Binary files a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/__pycache__/v2.cpython-312.pyc and /dev/null differ

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/shared.py b/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/shared.py

deleted file mode 100644

index 419b58f..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/shared.py

+++ /dev/null

@@ -1,206 +0,0 @@

-import sys

-import types

-import typing

-import warnings

-from collections import deque

-from collections.abc import Mapping, Sequence

-from dataclasses import is_dataclass

-from typing import (

- Annotated,

- Any,

- Union,

-)

-

-from fastapi.types import UnionType

-from pydantic import BaseModel

-from pydantic.version import VERSION as PYDANTIC_VERSION

-from starlette.datastructures import UploadFile

-from typing_extensions import get_args, get_origin

-

-# Copy from Pydantic v2, compatible with v1

-if sys.version_info < (3, 10):

- WithArgsTypes: tuple[Any, ...] = (typing._GenericAlias, types.GenericAlias) # type: ignore[attr-defined]

-else:

- WithArgsTypes: tuple[Any, ...] = (

- typing._GenericAlias, # type: ignore[attr-defined]

- types.GenericAlias,

- types.UnionType,

- ) # pyright: ignore[reportAttributeAccessIssue]

-

-PYDANTIC_VERSION_MINOR_TUPLE = tuple(int(x) for x in PYDANTIC_VERSION.split(".")[:2])

-PYDANTIC_V2 = PYDANTIC_VERSION_MINOR_TUPLE[0] == 2

-

-

-sequence_annotation_to_type = {

- Sequence: list,

- list: list,

- tuple: tuple,

- set: set,

- frozenset: frozenset,

- deque: deque,

-}

-

-sequence_types = tuple(sequence_annotation_to_type.keys())

-

-Url: type[Any]

-

-

-# Copy of Pydantic v2, compatible with v1

-def lenient_issubclass(

- cls: Any, class_or_tuple: Union[type[Any], tuple[type[Any], ...], None]

-) -> bool:

- try:

- return isinstance(cls, type) and issubclass(cls, class_or_tuple) # type: ignore[arg-type]

- except TypeError: # pragma: no cover

- if isinstance(cls, WithArgsTypes):

- return False

- raise # pragma: no cover

-

-

-def _annotation_is_sequence(annotation: Union[type[Any], None]) -> bool:

- if lenient_issubclass(annotation, (str, bytes)):

- return False

- return lenient_issubclass(annotation, sequence_types)

-

-

-def field_annotation_is_sequence(annotation: Union[type[Any], None]) -> bool:

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- for arg in get_args(annotation):

- if field_annotation_is_sequence(arg):

- return True

- return False

- return _annotation_is_sequence(annotation) or _annotation_is_sequence(

- get_origin(annotation)

- )

-

-

-def value_is_sequence(value: Any) -> bool:

- return isinstance(value, sequence_types) and not isinstance(value, (str, bytes))

-

-

-def _annotation_is_complex(annotation: Union[type[Any], None]) -> bool:

- return (

- lenient_issubclass(annotation, (BaseModel, Mapping, UploadFile))

- or _annotation_is_sequence(annotation)

- or is_dataclass(annotation)

- )

-

-

-def field_annotation_is_complex(annotation: Union[type[Any], None]) -> bool:

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- return any(field_annotation_is_complex(arg) for arg in get_args(annotation))

-

- if origin is Annotated:

- return field_annotation_is_complex(get_args(annotation)[0])

-

- return (

- _annotation_is_complex(annotation)

- or _annotation_is_complex(origin)

- or hasattr(origin, "__pydantic_core_schema__")

- or hasattr(origin, "__get_pydantic_core_schema__")

- )

-

-

-def field_annotation_is_scalar(annotation: Any) -> bool:

- # handle Ellipsis here to make tuple[int, ...] work nicely

- return annotation is Ellipsis or not field_annotation_is_complex(annotation)

-

-

-def field_annotation_is_scalar_sequence(annotation: Union[type[Any], None]) -> bool:

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- at_least_one_scalar_sequence = False

- for arg in get_args(annotation):

- if field_annotation_is_scalar_sequence(arg):

- at_least_one_scalar_sequence = True

- continue

- elif not field_annotation_is_scalar(arg):

- return False

- return at_least_one_scalar_sequence

- return field_annotation_is_sequence(annotation) and all(

- field_annotation_is_scalar(sub_annotation)

- for sub_annotation in get_args(annotation)

- )

-

-

-def is_bytes_or_nonable_bytes_annotation(annotation: Any) -> bool:

- if lenient_issubclass(annotation, bytes):

- return True

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- for arg in get_args(annotation):

- if lenient_issubclass(arg, bytes):

- return True

- return False

-

-

-def is_uploadfile_or_nonable_uploadfile_annotation(annotation: Any) -> bool:

- if lenient_issubclass(annotation, UploadFile):

- return True

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- for arg in get_args(annotation):

- if lenient_issubclass(arg, UploadFile):

- return True

- return False

-

-

-def is_bytes_sequence_annotation(annotation: Any) -> bool:

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- at_least_one = False

- for arg in get_args(annotation):

- if is_bytes_sequence_annotation(arg):

- at_least_one = True

- continue

- return at_least_one

- return field_annotation_is_sequence(annotation) and all(

- is_bytes_or_nonable_bytes_annotation(sub_annotation)

- for sub_annotation in get_args(annotation)

- )

-

-

-def is_uploadfile_sequence_annotation(annotation: Any) -> bool:

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- at_least_one = False

- for arg in get_args(annotation):

- if is_uploadfile_sequence_annotation(arg):

- at_least_one = True

- continue

- return at_least_one

- return field_annotation_is_sequence(annotation) and all(

- is_uploadfile_or_nonable_uploadfile_annotation(sub_annotation)

- for sub_annotation in get_args(annotation)

- )

-

-

-def is_pydantic_v1_model_instance(obj: Any) -> bool:

- with warnings.catch_warnings():

- warnings.simplefilter("ignore", UserWarning)

- from pydantic import v1

- return isinstance(obj, v1.BaseModel)

-

-

-def is_pydantic_v1_model_class(cls: Any) -> bool:

- with warnings.catch_warnings():

- warnings.simplefilter("ignore", UserWarning)

- from pydantic import v1

- return lenient_issubclass(cls, v1.BaseModel)

-

-

-def annotation_is_pydantic_v1(annotation: Any) -> bool:

- if is_pydantic_v1_model_class(annotation):

- return True

- origin = get_origin(annotation)

- if origin is Union or origin is UnionType:

- for arg in get_args(annotation):

- if is_pydantic_v1_model_class(arg):

- return True

- if field_annotation_is_sequence(annotation):

- for sub_annotation in get_args(annotation):

- if annotation_is_pydantic_v1(sub_annotation):

- return True

- return False

diff --git a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/v2.py b/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/v2.py

deleted file mode 100644

index 25b6814..0000000

--- a/backend/.venv/lib/python3.12/site-packages/fastapi/_compat/v2.py

+++ /dev/null

@@ -1,568 +0,0 @@

-import re

-import warnings